If you’re searching for a clear, up-to-date look at the evolution of computer hardware, you’re likely trying to understand how rapid technological shifts impact performance, compatibility, security, and future investments. From room-sized mainframes to AI-powered edge devices, hardware advancements have transformed not only computing power but also how we work, communicate, and innovate.

This article breaks down the key milestones, breakthrough components, and emerging trends shaping modern systems—without overwhelming you with unnecessary jargon. We focus on what matters most: processing power, storage innovation, energy efficiency, connectivity, and how these changes influence today’s smart devices and digital infrastructure.

Our insights are grounded in ongoing analysis of hardware releases, semiconductor advancements, cybersecurity implications, and real-world performance benchmarks. By combining technical research with practical application, we provide a reliable, forward-looking perspective.

Whether you’re a tech enthusiast, IT professional, or curious learner, this guide will help you understand where computer hardware has been—and where it’s heading next.

From Room-Sized Calculators to Pocket Supercomputers

In 1945, ENIAC weighed 30 tons and used vacuum tubes, glass components that controlled electricity like primitive switches. Today, your smartphone outperforms it while fitting in your pocket. So what changed? The evolution of computer hardware moved from bulky tubes to transistors (tiny semiconductor switches), then to integrated circuits, which pack millions, and now billions, of transistors onto a single chip. As a result, devices became faster, smaller, and cheaper. Understanding this progression helps you judge new trends, from modern AI chips to quantum computing (machines that use qubits instead of bits). Think less room-sized monster, more sci-fi superpower.

The Age of Giants: Vacuum Tubes and the Birth of Computing


In the 1940s and 1950s, computers ran on vacuum tubes—glass devices that controlled electrical flow and acted as primitive switches. Think of them as oversized light bulbs flipping between on and off states (and burning out just as dramatically). This era marked the beginning of the evolution of computer hardware.

Consider ENIAC vs. your laptop:

- Size: ENIAC filled a room; your laptop fits in a backpack.

- Power Use: ENIAC consumed about 150 kilowatts (U.S. Army data); a modern laptop typically draws tens of watts.

- Reliability: ENIAC used roughly 18,000 tubes, with frequent failures (Computer History Museum).
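To see why a machine with roughly 18,000 tubes failed so often, a rough back-of-the-envelope sketch helps. The per-tube failure probability below is a hypothetical value chosen only for illustration, not a historical measurement, and the model assumes tubes fail independently of one another.

```python
# Rough sketch: chance that every tube in a machine survives one day.
# ASSUMPTION: the per-tube failure probability is an illustrative,
# made-up value, and failures are treated as independent events.

def all_tubes_survive(tube_count: int, daily_failure_prob: float) -> float:
    """Probability that none of `tube_count` tubes fails in a day."""
    return (1.0 - daily_failure_prob) ** tube_count

TUBE_COUNT = 18_000          # approximate ENIAC tube count cited above
DAILY_FAILURE_PROB = 0.0001  # hypothetical: 1 in 10,000 per tube per day

p_ok = all_tubes_survive(TUBE_COUNT, DAILY_FAILURE_PROB)
print(f"Chance of a failure-free day: {p_ok:.1%}")
```

Even when each individual tube is very reliable in this model, the sheer number of tubes makes a trouble-free day the exception (well under one in five with these assumed numbers).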

Supporters argue these machines were revolutionary—and they were. But critics point out the pain: constant tube replacements, intense heat, and programming through punch cards and physical rewiring.

Setup wasn’t plug-and-play; it required entire teams to configure switches and monitor operations. Compared to today’s instant boot-ups, it was closer to launching a spaceship than opening an app.

The Transistor Leap: Miniaturization and Reliability

In 1947, Bell Labs unveiled the transistor—a breakthrough many call the birth of modern electronics. That’s accurate, but also understated. The transistor didn’t just improve machines; it redefined what machines could be.

Vacuum tubes had powered early computers, yet they were bulky, fragile, and notoriously power-hungry (and yes, they burned out like cheap light bulbs). Transistors changed the equation:

- Smaller size, enabling compact circuit design

- Lower power consumption, reducing heat and cost

- Greater reliability, minimizing constant failures

- Faster switching speeds, accelerating computation

Here’s the contrarian take: people often romanticize vacuum-tube computers as heroic pioneers. In reality, they were technological bottlenecks. Without transistors, the so-called evolution of computer hardware would have stalled.

Transistors fueled second-generation computing, shrinking machines into affordable “minicomputers.” Suddenly, universities and businesses—not just governments—could harness digital power. That democratization, not just miniaturization, was the true revolution.

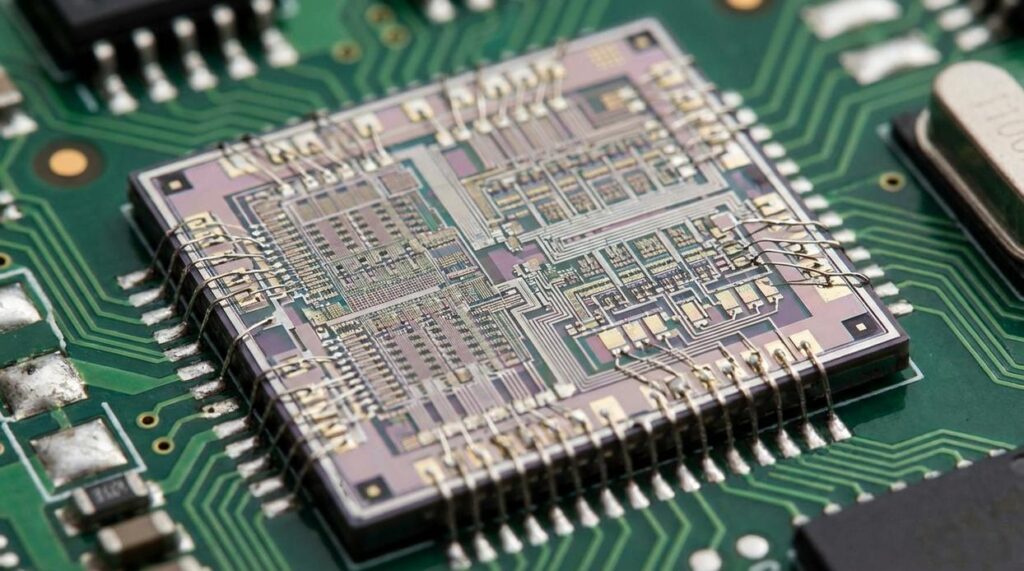

The Integrated Circuit: Placing an Entire Computer on a Chip

At first, computers filled rooms with a low electric hum, warm air drifting from refrigerator-sized cabinets. Then came the integrated circuit (IC)—a tiny slice of silicon that embedded multiple transistors (microscopic electronic switches that control current) onto a single chip. Instead of wiring components by hand, engineers etched pathways so fine they looked like metallic spiderwebs under a microscope. This leap marked a defining chapter in the evolution of computer hardware.

Then, in 1971, the Intel 4004 arrived. About the size of a fingernail, it was the first commercially available microprocessor—a complete central processing unit (CPU), meaning the “brain” of a computer, on one chip. You could almost feel the shift in the air: computing power shrinking, costs falling, possibilities expanding.

As a result, the 1970s ignited the PC boom. Machines like the Apple II and later the IBM PC moved computing from sterile labs into living rooms and small offices, their plastic casings clicking softly under eager fingertips.

Some argue mainframes were more powerful, and they were. However, on-chip processing enabled localized computing, an early step toward more secure data handling compared with time-sharing systems, where many users shared one machine.

Riding the Exponential Curve: Moore’s Law, Graphics, and Storage

Moore’s Law is the observation that the number of transistors on a microchip doubles roughly every two years, leading to exponential performance gains. Gordon Moore first described the trend in 1965 and revised the pace to about two years in 1975. Exponential means growth that multiplies by a fixed factor each period, so the absolute gains keep getting larger instead of staying steady. This predictable pattern helped businesses plan upgrades and consumers expect faster devices regularly (Moore, 1965). If your laptop feels dramatically faster than one from a decade ago, this is a large part of why.
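The doubling rule can be written as `N(t) = N0 * 2 ** ((t - t0) / T)`, where `T` is the doubling period. The sketch below is a rough illustration only: it assumes a two-year doubling period and uses the Intel 4004 (1971, about 2,300 transistors) as the starting point. That transistor count is not given in this article, and real chips do not follow the curve exactly.

```python
# Sketch of Moore's Law as a simple doubling formula.
# ASSUMPTIONS: a fixed two-year doubling period and a starting point of
# about 2,300 transistors in 1971 (approximate Intel 4004 figure).

def projected_transistors(start_count: float, start_year: int,
                          target_year: int, doubling_years: float = 2.0) -> float:
    """Estimate the transistor count in `target_year` under steady doubling."""
    doublings = (target_year - start_year) / doubling_years
    return start_count * 2 ** doublings

START_YEAR = 1971
START_COUNT = 2_300

for year in (1971, 1981, 1991, 2001, 2011, 2021):
    estimate = projected_transistors(START_COUNT, START_YEAR, year)
    print(f"{year}: ~{estimate:,.0f} transistors")
```

With a two-year doubling period, each decade multiplies the estimate by 2^5 = 32, which is why ten-year-old hardware can feel so far behind.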

But raw speed wasn’t enough. Enter the GPU (Graphics Processing Unit)—a specialized chip designed to render complex 2D and 3D images. Instead of forcing the CPU (Central Processing Unit) to handle everything, GPUs process many calculations simultaneously, powering modern gaming and 3D design. Think Pixar-level visuals instead of blocky 90s polygons (yes, we’ve come a long way from early PlayStation graphics).

Storage saw its own leap. HDDs (Hard Disk Drives) use spinning disks, while SSDs (Solid-State Drives) use flash memory with no moving parts. The result? Faster boot times, quicker file access, and fewer mechanical failures (Samsung, 2023). Pro tip: upgrading to an SSD often delivers the most noticeable speed boost.
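One reason spinning disks are slower is that the drive often has to wait for the platter to rotate to the right position before it can read. Here is a minimal sketch of that average wait, assuming common spindle speeds (the RPM values are illustrative assumptions, not figures from this article) and ignoring seek time and transfer time.

```python
# Sketch: average rotational delay of a spinning hard disk.
# ASSUMPTION: the spindle speeds below are typical example values.

def avg_rotational_latency_ms(rpm: float) -> float:
    """On average the drive waits half a revolution; result in milliseconds."""
    seconds_per_revolution = 60.0 / rpm
    return seconds_per_revolution / 2 * 1000

for rpm in (5400, 7200):
    delay = avg_rotational_latency_ms(rpm)
    print(f"{rpm} RPM: ~{delay:.2f} ms average rotational delay")
```

An SSD has no platter to wait for, so this part of the delay disappears entirely.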

Finally, instead of endlessly increasing clock speed, manufacturers adopted multi-core processors—multiple processing units on one chip—to improve multitasking. This shift defines the evolution of computer hardware today.
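The idea of splitting one job across several processing units can be sketched in a few lines. This is only an illustration: the worker count and the `sum_of_squares` and `split_range` helpers are hypothetical, and Python threads stand in for cores here (in CPython, CPU-bound work generally needs separate processes to run truly in parallel).

```python
from concurrent.futures import ThreadPoolExecutor

# Sketch: divide one large job into chunks, hand each chunk to a worker,
# then combine the partial results. Threads stand in for CPU cores.

def sum_of_squares(chunk: range) -> int:
    """The work done by a single worker on its share of the data."""
    return sum(n * n for n in chunk)

def split_range(total: int, parts: int) -> list:
    """Split range(total) into at most `parts` contiguous chunks."""
    step = max(1, -(-total // parts))  # ceiling division, at least 1
    return [range(start, min(start + step, total))
            for start in range(0, total, step)]

TOTAL = 100_000   # hypothetical workload size
WORKERS = 4       # hypothetical number of cores

chunks = split_range(TOTAL, WORKERS)
with ThreadPoolExecutor(max_workers=WORKERS) as pool:
    partial_sums = list(pool.map(sum_of_squares, chunks))

parallel_total = sum(partial_sums)
serial_total = sum_of_squares(range(TOTAL))
print(parallel_total == serial_total)
```

The combined result matches the single-worker result; what changes on real multi-core hardware is that the chunks can be processed at the same time.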

I remember swapping the battery in my sluggish phone, expecting magic, but the real leap came from a new phone and its System-on-a-Chip. A System-on-a-Chip (SoC) integrates the CPU, GPU, memory, and radios into one power-efficient package (think Avengers-level teamwork). This hardware consolidation, central to the evolution of computer hardware, fuels smartphones, tablets, and IoT sensors. Some argue discrete chips offer flexibility, and they do, for desktops. But in pockets, efficiency wins. The AI hardware race adds TPUs and NPUs, specialized accelerators built for neural networks’ massive workloads. Smaller, smarter, faster became tangible. The table below gives a snapshot of one part and its role:

|Part|Role|
|---|---|
|CPU|Logic|

The story of computing reads like a sci-fi montage: vacuum tubes, clunky yet revolutionary, giving way to transistors, then microprocessors, and finally SoCs powering phones. This evolution of computer hardware follows one relentless theme: smaller, faster, and more efficient with each generation.

- Vacuum tubes lit the spark.

- Transistors shrank the room.

- Microprocessors put a brain on a chip.

- SoCs tucked entire systems into your palm.

From streaming superhero sagas to navigating with GPS, every swipe depends on this march forward (yes, even your late-night memes). Next up: quantum leaps, neuromorphic designs, and hardware straight out of Star Trek.

Stay Ahead of What’s Next in Tech

You came here to better understand the evolution of computer hardware and how it impacts your devices, data, and daily digital experience. Now you have a clearer view of how processing power, storage, connectivity, and smart integrations are rapidly transforming the way we work and live.

But here’s the reality: technology doesn’t slow down. If you’re not keeping up, you risk investing in outdated devices, overlooking security vulnerabilities, or missing out on performance gains that could save you time and frustration.

Staying informed isn’t just about curiosity—it’s about staying efficient, secure, and competitive in a world driven by constant innovation.


Head of Digital Insights & Security

Tamara Strongivers has opinions about digital innovations and concepts. Informed ones, backed by real experience, but opinions nonetheless, and they don't try to disguise them as neutral observation. They think a lot of what gets written about Digital Innovations and Concepts, Interactive Tech Setup Guides, and Knowledge Vault is either too cautious to be useful or too confident to be credible, and their work tends to sit deliberately in the space between those two failure modes.

Reading Tamara's pieces, you get the sense of someone who has thought about this stuff seriously and arrived at actual conclusions, not just collected a range of perspectives and declined to pick one. That can be uncomfortable when they land on something you disagree with. It's also why the writing is worth engaging with. Tamara isn't interested in telling people what they want to hear. They are interested in telling them what they actually think, with enough reasoning behind it that you can push back if you want to. That kind of intellectual honesty is rarer than it should be.

What Tamara is best at is the moment when a familiar topic reveals something unexpected: when the conventional wisdom turns out to be slightly off, or when a small shift in framing changes everything. They find those moments consistently, which is why their work tends to generate real discussion rather than just passive agreement.
